The Frustration that Comes from Getting Scraped and Outranked

What is Scraped Content?

Scraped content is essentially content that is copied from one site and placed on another. Typically, content is scraped with an automated script rather than copied by hand, because it's so much more efficient to steal content that way. It's not unusual for all articles on a site to get scraped and placed on another site.

Why Do People Scrape Content and Display it?

Crawling and indexing pages like Google does is a form of scraping, but that's not what we are concerned with. We are concerned with people who take our content and place it on another site in the hope of driving traffic to it via search engines. Scraping is such a low-cost way of getting content that all major scrapers need is a trickle of traffic from search engines to make it financially viable.

When Does Scraping Become a Major Problem for Publishers?

Scraping becomes a major problem for publishers when search engines get the original source wrong for queries that send traffic. When search engines get it right, it's not too much of a problem. When they get it wrong, the scraped page ranks above the original content that the publisher spent time and money producing, which cannibalizes the publisher's potential audience and undermines their work.

Why Do Search Engines Get the Original Source Wrong?

There are a few major theories about why search engines get the original source wrong. The first is that when content is newly published, a scraper picks it up and creates a copy almost instantly. Google then indexes the scraper's page before the original, deems the first copy it found to be the original, and gives it top billing in the search results.

The second major theory is that the site that hosts the scraped page has more authority than the site it was scraped from. For example, if CNN (theoretically) scraped your article from a newly created blog, and then drove a bunch of traffic and links to the page, that scraped page would have significantly more juice behind it and would likely outrank the original. The same thing can happen with legally syndicated content, like Associated Press articles.

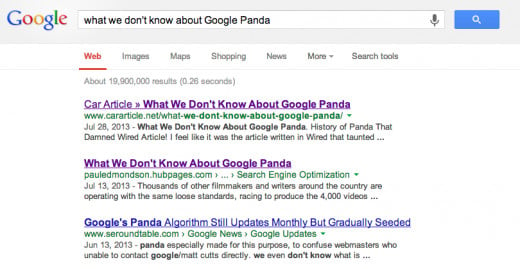

The third is the most frustrating for everyday people. I'll use a recent example from my own site to illustrate the frustration. I wrote a post on Google Panda. My site (pauledmondson.hubpages.com) is full of unique content, all of it original and written by me. Perhaps my writing isn't the best, but surely I don't deserve to be outranked by a scraper.

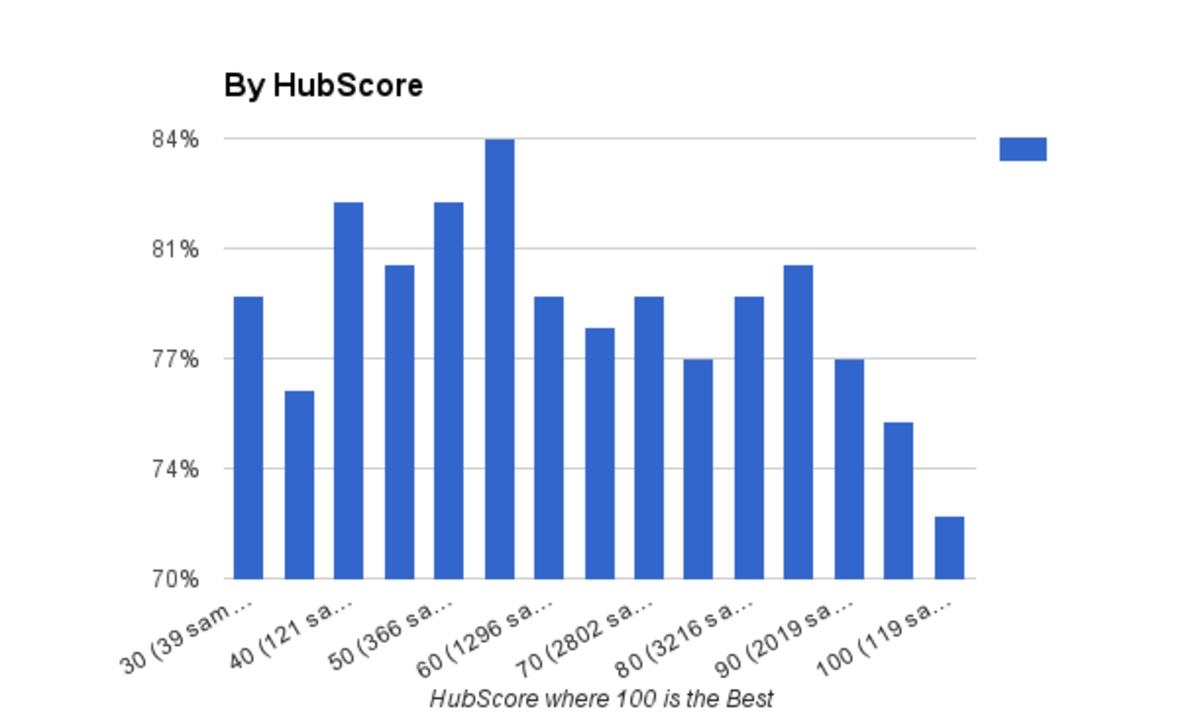

If you search Google for "what we don't know about Google Panda," you will see a site that ranks #1, above my site. Why did this happen? My theory is that Google decided my site was low quality, and that the combination of my Hubs on search engines, BBQ, and kids didn't fit what it saw as high quality. Over the last few Panda updates I lost a huge amount of my traffic from Google. When Google punishes a site with Panda or a penalty, it suppresses the pages so much that a scraped copy of a page can outrank the original. I really believe Google has it wrong with my site. Time will tell.

As a webmaster, there is no way for me to tell why Google demoted my site and is allowing scrapers to outrank my work.

What Can You Do About Scraped Content Outranking Original?

Here are a few options.

1. Post the example in the Google Webmaster Forums. Include the query and your page. First make sure the scraper is actually outranking your content for specific queries. Hopefully, this will help Google solve the issue.

2. File a DMCA request. You can do it via Google's wizard. You can also send a DMCA request to the other site following these guidelines.

3. If the scraper site is monetizing your content with ads, affiliate links, or other means, you can contact the partners and let them know. It's possible that the monetization partner will cancel the scraper's account.
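Before reporting anything, it helps to confirm that the scraper really does sit above your page for a given query. Here is a minimal Python sketch of that check. It assumes you have already copied the result URLs for the query, in order, into a list by hand. The function names and the example hosts are made up for illustration, and nothing here talks to Google.

```python
from urllib.parse import urlparse


def host_of(url):
    """Return the lowercase host of a URL, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def rank_of(result_urls, site_host):
    """Return the 1-based position of the first result on site_host, or None."""
    for position, url in enumerate(result_urls, start=1):
        if host_of(url) == site_host:
            return position
    return None


def is_outranked(result_urls, original_host, scraper_host):
    """True when the scraper's page appears above the original in the results."""
    original = rank_of(result_urls, original_host)
    scraper = rank_of(result_urls, scraper_host)
    if scraper is None:
        return False  # the scraper does not show up for this query at all
    return original is None or scraper < original


# Hypothetical result list for one query, in the order the results appeared.
results = [
    "https://www.scraper.example/copied-post",
    "https://original.example/my-post",
]
print(is_outranked(results, "original.example", "scraper.example"))
```

Running a check like this for each query that matters gives you a concrete list of examples to include in a forum post or a DMCA request.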

TechCrunch is Scraped, but Ranks Appropriately

Do I Need to Worry About All My Content Getting Scraped?

My recommendation is to focus on situations where your original content is getting outranked. There are services that will monitor your content and notify you when it's duplicated on the web, but the volume of notifications can be a bit overwhelming.
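To give a rough idea of how duplicate detection can work, here is a small Python sketch that compares two pieces of text by their overlapping five-word sequences ("shingles") and scores the overlap with Jaccard similarity. This is only one common approach, not a description of how any particular monitoring service works. The function names and the 0.8 threshold are assumptions chosen for illustration.

```python
import re


def shingles(text, size=5):
    """Return the set of overlapping word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Short texts become a single shingle (or nothing, if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(original, candidate, size=5):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(original, size), shingles(candidate, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def looks_scraped(original, candidate, threshold=0.8):
    """Flag the candidate when most of its shingles match the original.

    The 0.8 threshold is an arbitrary starting point, not a tuned value.
    """
    return similarity(original, candidate) >= threshold
```

A score near 1.0 suggests a wholesale copy, while short quotes or excerpts score much lower, which fits the advice above to focus on the copies that matter.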

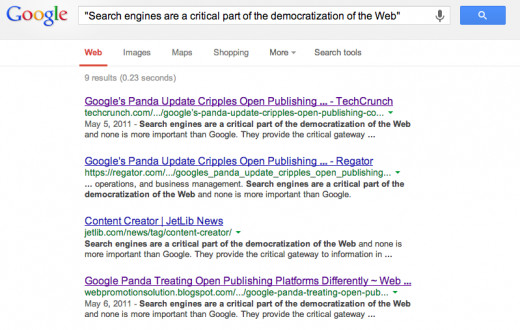

As an example, I did a guest post on Panda on TechCrunch. If you perform the search with omitted results included, you can see how many copies of the article there are. I don't think TC is too concerned, because their original page is ranking first. If it weren't, filing a DMCA complaint for each infringing copy would be very burdensome, since TechCrunch is so extensively scraped.